ChatGPT Toolbox for Data Scientists: Organize Code, ML Experiments & Analysis (2026)

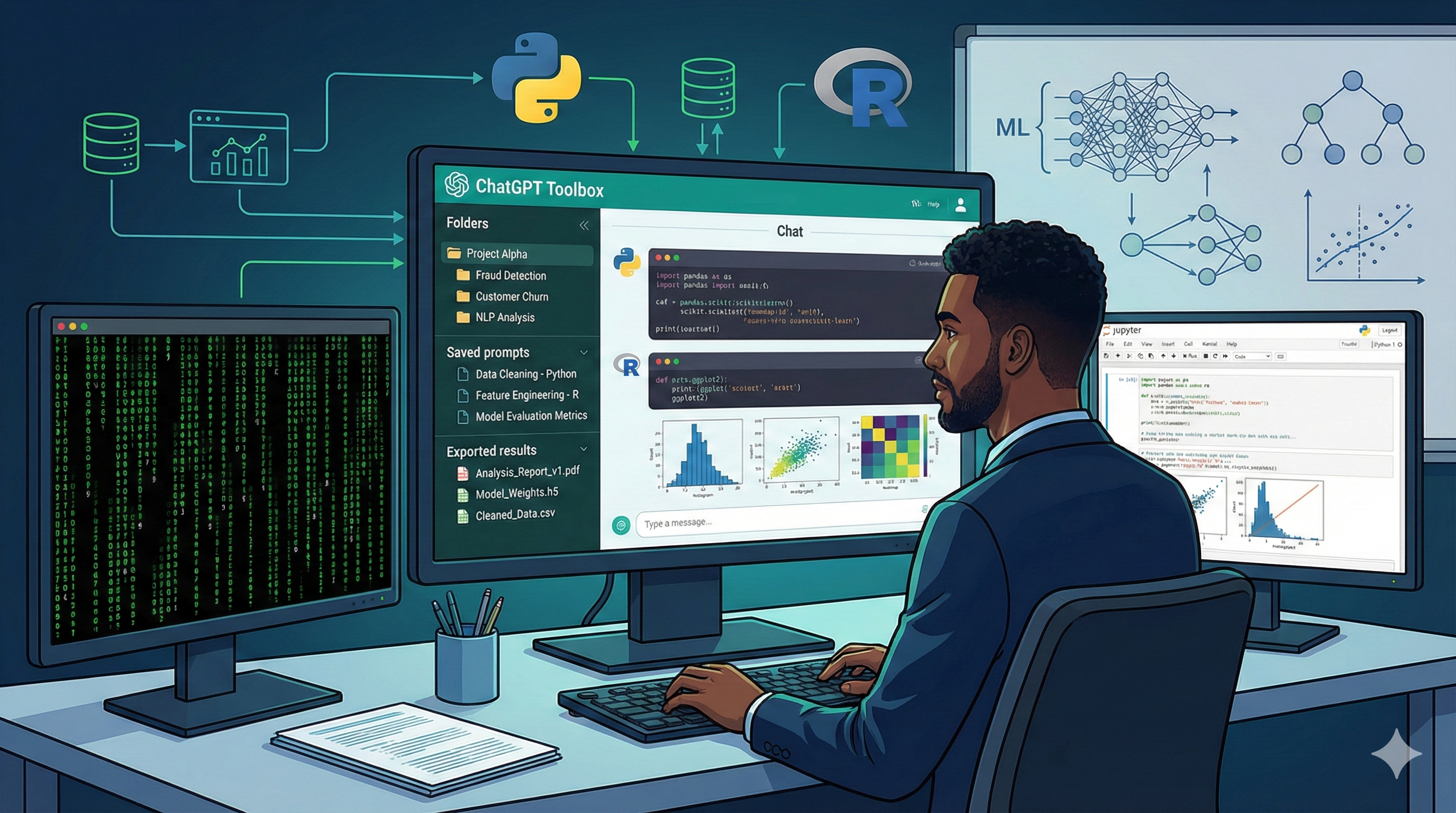

AI Toolbox (formerly ChatGPT Toolbox) is a Chrome extension that adds folders, full-text search, bulk export, and a prompt library to ChatGPT, built for data scientists managing EDA, feature engineering, ML experiments, and multi-language code (Python, R, SQL) across parallel projects. It works with GPT-5.3 Instant, GPT-5.4 Thinking, and GPT-5.4 Pro - the current ChatGPT 2026 lineup. The free Basic plan includes 2 folders, 2 pinned chats, 2 saved prompts, up to 5 search results per query, media gallery, and RTL support. Premium ($9.99/month or $99 one-time Lifetime) unlocks unlimited folders, unlimited full-text search, bulk export to TXT and JSON, prompt chaining, and cross-device sync. With 20,000+ active users and a 4.5/5 Chrome Web Store rating, ChatGPT Toolbox is the organization layer data scientists actually need on top of ChatGPT.

Data science work produces an enormous volume of ChatGPT conversations: EDA on a new dataset, feature engineering experiments, hyperparameter searches, model comparisons, debugging sessions, SQL query optimization, visualization code, and stakeholder communication. A typical data scientist juggling 3-5 active projects can generate 300-500 ChatGPT conversations per month. Native ChatGPT's single chronological sidebar with title-only search becomes unusable almost immediately. This guide shows how to use ChatGPT Toolbox to organize data science work by project and pipeline phase, find past code solutions with full-text search, save reusable code templates in the prompt library, and export ML experiments for reproducibility.

Why Data Scientists Hit ChatGPT's Limits Faster Than Anyone

Data science generates high-volume, high-specificity ChatGPT conversations with reusable code, making organization and full-text search uniquely valuable. Here's where native ChatGPT breaks down:

- Code snippets get buried: You wrote a perfect pandas groupby transformation three weeks ago, and now you can't find it. Native ChatGPT can't search inside messages, only titles.

- Scattered language contexts: Python, R, SQL, Julia, and Bash conversations all sit in one sidebar with no way to filter by language or library.

- No experiment tracking: A single hyperparameter sweep generates 10+ conversations about different algorithms, feature sets, and scoring strategies. They have no structural relationship in native ChatGPT.

- No bulk export for reproducibility: When a manager asks "how did you arrive at this model?", there's no way to extract your AI-assisted methodology from a specific project.

- Repeated prompts everywhere: "Convert this SQL to use window functions", "Optimize this pandas operation for memory", "Explain this regression output" - these are the same prompts every week, reinvented from scratch.

ChatGPT Toolbox adds four missing layers: folders (unlimited on Premium) for project and pipeline organization, full-text search (unlimited on Premium) for finding any past code, prompt library (unlimited on Premium) for reusable templates, and bulk export (Premium) for documentation. The free Basic plan's 2-folder cap is too low for even a single data science project - data scientists need Premium at minimum.

Folder Structures for Data Science Projects

The right folder structure mirrors the data science pipeline: one top-level folder per project, subfolders per pipeline phase, and language-specific organization where relevant. Here are battle-tested templates for common project types:

ML Classification / Regression Project

- [Project Name] / 01 EDA: Exploratory data analysis, distribution checks, missing value analysis, correlation studies

- [Project Name] / 02 Feature Engineering: Encoding, scaling, transformations, feature selection conversations

- [Project Name] / 03 Baseline Models: First-pass algorithms (logistic regression, random forest) to establish a performance floor

- [Project Name] / 04 Advanced Models: XGBoost, LightGBM, neural networks, ensembles

- [Project Name] / 05 Hyperparameter Tuning: Grid search, random search, Bayesian optimization runs

- [Project Name] / 06 Evaluation: Metrics analysis, confusion matrices, SHAP plots, error analysis

- [Project Name] / 07 Deployment: Model serialization, API design, monitoring setup

- [Project Name] / 08 Documentation: Stakeholder communication, model cards, reports

Time Series Forecasting Project

- [Project Name] / Data Prep: Resampling, stationarity tests (ADF, KPSS), missing value imputation for time series

- [Project Name] / Decomposition: Trend, seasonality, residual analysis

- [Project Name] / Classical Models: ARIMA, SARIMA, exponential smoothing conversations

- [Project Name] / ML Models: Prophet, gradient boosting with lag features

- [Project Name] / Deep Learning: LSTM, Transformer-based forecasting

- [Project Name] / Backtesting: Walk-forward validation, cross-validation for time series

- [Project Name] / Production: Forecast generation, monitoring, retraining schedules

Deep Learning Project

- [Project Name] / Architecture: Model design, layer selection, CNN/RNN/Transformer variants

- [Project Name] / Training: Loss functions, optimizers, learning rate schedules, checkpointing

- [Project Name] / Data Pipeline: Augmentation, batching, tf.data or DataLoader setup

- [Project Name] / Transfer Learning: Pre-trained model selection, fine-tuning strategy

- [Project Name] / Evaluation: Validation strategy, test metrics, qualitative analysis

- [Project Name] / Deployment: ONNX, TensorRT, TorchScript, inference optimization

Cross-Project Reference Folders

Beyond per-project folders, create reference folders for reusable knowledge:

- Reference / Pandas Snippets: Groupby operations, merging strategies, window functions, pivot tables

- Reference / SQL Patterns: Complex joins, CTEs, window functions, optimization tricks

- Reference / Visualization: Matplotlib, Seaborn, Plotly recipes you've refined over time

- Reference / Statistical Methods: Hypothesis tests, effect size calculations, power analysis

- Reference / ML Algorithms: Algorithm descriptions, when to use each, hyperparameter defaults

- Reference / Production: MLOps patterns, monitoring, drift detection, CI/CD for ML

Pin the 3-5 conversations you reference most often for instant access. Premium's unlimited pins mean you're not forced to choose between project-level and reference-level pins.

Full-Text Search for Code and Technical Terms

Full-text search is the killer feature for data scientists because code vocabulary is highly specific - you can find any past conversation by searching for library functions, error messages, or algorithm names. Premium unlocks unlimited search results (Basic is capped at 5 per query).

| Search query | What it finds | Use case |
|---|---|---|
| groupby transform | Every conversation using pandas groupby().transform() | Finding your optimized version of a common pattern |
| LightGBM hyperparameters | Past hyperparameter tuning runs for LightGBM | Reusing proven settings for a similar dataset |
| window function LAG | SQL conversations using the LAG window function | Finding the SQL pattern for a previous-row or period-over-period comparison query |
| ValueError | Every conversation where you hit a Python ValueError | Finding the previous solution to a recurring error |
| confusion matrix normalize | Visualization code for normalized confusion matrices | Reusing the plot code for a new classification project |
| cross_val_score stratified | Stratified cross-validation setups | Replicating a known-good validation pattern |

Enable the exact-match toggle when searching for specific function signatures or error strings. Without it, broader matches surface first. Full-text search typically returns results in under a second, even across thousands of conversations.
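To show why the exact-match toggle matters, here is a minimal sketch that contrasts broad matching (every term appears somewhere) with exact-phrase matching over a few conversations held in memory. It illustrates the general idea only. It is not ChatGPT Toolbox's actual search implementation. The `Conversation` type, the sample chats, and both function names are hypothetical. Making exact mode case-sensitive is a choice made for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    # Hypothetical record: a chat title plus its concatenated message text.
    title: str
    text: str


def broad_match(conversations, query):
    """Return conversations containing every query term, in any order or position (case-insensitive)."""
    terms = query.lower().split()
    return [c for c in conversations if all(t in c.text.lower() for t in terms)]


def exact_match(conversations, phrase):
    """Return conversations containing the phrase verbatim (case-sensitive in this sketch)."""
    return [c for c in conversations if phrase in c.text]


chats = [
    Conversation("Churn features", "Used df.groupby('user').transform('mean') for per-user averages."),
    Conversation("Sales rollup", "We transform the sales table, then groupby region in SQL."),
    Conversation("Date parsing bug", "ValueError: time data '2024-13-01' does not match format"),
]

print([c.title for c in broad_match(chats, "groupby transform")])          # → ['Churn features', 'Sales rollup']
print([c.title for c in exact_match(chats, "groupby('user').transform")])  # → ['Churn features']
print([c.title for c in exact_match(chats, "ValueError: time data")])      # → ['Date parsing bug']
```

Broad matching pulls in the unrelated "Sales rollup" chat because both words appear somewhere in it. The exact phrase narrows the results to the pandas call itself.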

Prompt Library: Reusable Data Science Templates

The prompt library lets you save high-value data science templates and access them with the // shortcut in any conversation. Premium unlocks unlimited prompts (Basic is capped at 2). Here are the templates data scientists should save first:

| Prompt name | Template | When to use |
|---|---|---|
| eda-init | I have a dataset with [shape] and columns [list]. Generate a standard EDA report: dtypes, null counts, descriptive stats per numeric column, value counts per categorical column, and a correlation matrix for numerics. Use pandas. | Starting EDA on a new dataset |
| feature-engineer | For this dataset [description], suggest feature engineering strategies: encoding options for categoricals, scaling for numerics, transformations for skewed distributions, and interaction features worth exploring. | Moving from EDA to feature engineering |
| baseline-model | Train a baseline [classification/regression] model on this dataset using scikit-learn. Include a train-test split (stratified for classification), a linear baseline (logistic regression for classification, linear regression for regression), a random forest, 5-fold cross-validation, and evaluation metrics. | Establishing a performance floor |
| explain-results | Explain these model results in plain language for a non-technical stakeholder: [results]. Include what the metric means, why it matters, and 2-3 business implications. | Stakeholder communication |
| debug-error | I'm hitting this error: [error]. Here's my code: [code]. Identify the root cause and suggest 2-3 fixes ranked by likelihood. | Debugging Python, R, or SQL errors |
| sql-optimize | Optimize this SQL query for [Postgres/Snowflake/BigQuery]: [query]. Explain each optimization and the expected performance impact. | SQL query tuning |
| viz-recipe | Create a [chart type] visualization of this data using [matplotlib/seaborn/plotly]: [data]. Include proper labels, legend, color scheme, and publication-quality styling. | Generating visualization code |

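Use {{variable}} syntax for dynamic values when you save these templates. For example, the bracketed placeholders above, such as [shape] or [query], become {{shape}} or {{query}}. For multi-step workflows, chain prompts with .. (Premium prompt chaining). For example, the chain eda-init -> feature-engineer -> baseline-model runs the full starter workflow on a new dataset with one command.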
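The placeholder-and-chain idea can be modeled in a few lines of Python. This is a hypothetical sketch of how `{{variable}}` substitution and a simple chain could work. It is not the extension's internal code. The `TEMPLATES` dictionary, the shortened template texts, and the `fill` and `run_chain` helpers are all illustrative assumptions. The sketch uses the "->" notation from the example chain for readability. `run_chain` only builds the list of filled prompts and does not send anything to ChatGPT.

```python
import re

# Hypothetical, shortened versions of the saved templates, written with {{variable}} placeholders.
TEMPLATES = {
    "eda-init": "I have a dataset with {{shape}} and columns {{columns}}. Generate a standard EDA report using pandas.",
    "feature-engineer": "For this dataset {{description}}, suggest feature engineering strategies.",
    "baseline-model": "Train a baseline {{task}} model on this dataset using scikit-learn.",
}

PLACEHOLDER = re.compile(r"\{\{(\w+)\}\}")


def fill(template: str, values: dict) -> str:
    """Replace each {{name}} with values[name]; raise KeyError if any value is missing."""
    missing = [name for name in PLACEHOLDER.findall(template) if name not in values]
    if missing:
        raise KeyError(f"missing values for: {', '.join(missing)}")
    return PLACEHOLDER.sub(lambda m: str(values[m.group(1)]), template)


def run_chain(chain: str, values: dict) -> list:
    """Split a chain like 'a -> b -> c' and return the filled prompt for each step, in order."""
    steps = [name.strip() for name in chain.split("->")]
    return [fill(TEMPLATES[name], values) for name in steps]


prompts = run_chain(
    "eda-init -> feature-engineer -> baseline-model",
    {"shape": "(10000, 12)", "columns": "age, plan, churned", "description": "telecom churn", "task": "classification"},
)
for step, prompt in enumerate(prompts, start=1):
    print(step, prompt)
```

The sketch fails loudly on a missing value rather than leaving a literal `{{name}}` in the prompt. That is usually the safer default when a chain runs several steps unattended.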

Bulk Export for ML Experiment Reproducibility

Bulk export (Premium) writes selected ChatGPT conversations to TXT or JSON files, enabling ML experiment documentation, model cards, and reproducibility archives. Data scientists use this for:

- Model cards: Export the conversations that led to a specific model's architecture, hyperparameters, and training decisions as reference documentation alongside the model artifact

- Reproducibility archives: Before deploying a model, export the full experiment folder as JSON for compliance, auditing, and future model comparisons

- Team handoff: When transferring a project to another data scientist, bulk export the project folder so they have the full AI-assisted history of decisions

- Publication supplementary materials: For researchers publishing data science results, export AI-assisted methodology conversations as part of reproducibility disclosures

- Retrospective analysis: Export completed experiments as JSON and feed them into an LLM for cross-experiment meta-analysis (what worked, what didn't)

- Backup: Monthly export of all active project folders as an archive layer beyond ChatGPT's own data retention

TXT format is best for human-readable sharing (team reviews, stakeholder reports). JSON format is best for programmatic analysis and long-term storage. Bulk export is not available on the free Basic plan - it requires Premium.
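As an example of the programmatic analysis that JSON export makes possible, the sketch below counts which libraries the exported experiment conversations mention. It also groups the conversations by folder. The export schema is an assumption. This sketch expects a list of conversation objects with `title`, `folder`, and `messages` fields, where each message has `role` and `content`. Check a real export file and adjust the field names before relying on it.

```python
import json
from collections import Counter

# Hypothetical export contents; for a real file use: with open(path) as f: conversations = json.load(f)
EXPORT = """
[
  {"title": "LightGBM sweep", "folder": "Churn / 05 Hyperparameter Tuning",
   "messages": [{"role": "user", "content": "Tune LightGBM num_leaves"},
                {"role": "assistant", "content": "Try optuna with LightGBM..."}]},
  {"title": "XGBoost baseline", "folder": "Churn / 04 Advanced Models",
   "messages": [{"role": "user", "content": "Compare XGBoost and LightGBM"}]}
]
"""

LIBRARIES = ["LightGBM", "XGBoost", "optuna", "pandas"]


def library_mentions(conversations):
    """Count how many conversations mention each library at least once (case-insensitive)."""
    counts = Counter()
    for conv in conversations:
        text = " ".join(m["content"] for m in conv["messages"]).lower()
        for lib in LIBRARIES:
            if lib.lower() in text:
                counts[lib] += 1
    return counts


def by_folder(conversations):
    """Group conversation titles by their folder path."""
    groups = {}
    for conv in conversations:
        groups.setdefault(conv["folder"], []).append(conv["title"])
    return groups


conversations = json.loads(EXPORT)
print(library_mentions(conversations))
print(by_folder(conversations))
```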

Cross-Device Sync for Work, Home, and Travel

Data scientists typically work across a work laptop, home desktop, and sometimes a personal cloud workstation. Cross-device sync (Premium) keeps folder structures, saved prompts, and settings consistent everywhere.

Without sync, your carefully organized "01 EDA / 02 Feature Engineering / 03 Models" folder structure exists only on the machine where you created it. Moving to a different workstation means either losing the organization or rebuilding it by hand. Premium's sync removes that friction, so you can start EDA on your work laptop and continue seamlessly on your home desktop at night.

Time Savings Calculation for Data Scientists

A data scientist running 30+ ChatGPT conversations per week saves an estimated 62 hours per year with Premium, worth about $4,675 at a $75/hour rate. Here's the per-task breakdown:

| Task | Time saved | Annual hours saved | Annual value ($75/hr) |
|---|---|---|---|
| Finding past code solutions (full-text search) | 15 min/week | 13 hrs | $975 |
| Reusing pandas, SQL, and viz snippets | 20 min/week | 17.3 hrs | $1,300 |
| Organizing by project and pipeline phase | 10 min/week | 8.7 hrs | $650 |
| Prompt library for EDA and modeling templates | 15 min/week | 13 hrs | $975 |
| Bulk export for model documentation | 30 min/month | 6 hrs | $450 |
| Cross-device sync eliminates rebuilding | 5 min/week | 4.3 hrs | $325 |
| Total | ~65 min/week + 30 min/month | ~62 hrs/year | $4,675 |

Premium at $119.88/year returns roughly 3,800% ROI on that $4,675. Premium Lifetime at $99 one-time breaks even in about 8 days and costs nothing further after that. See our full ROI calculator for other professional profiles.
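The table's totals and the ROI figures follow from simple arithmetic. The calculation is reproduced here so you can substitute your own minutes and hourly rate. The inputs are the article's per-task estimates, not measurements. The sketch assumes 52 weeks and 12 months per year.

```python
WEEKS_PER_YEAR = 52
MONTHS_PER_YEAR = 12
HOURLY_RATE = 75.0           # $/hour, as in the table
PREMIUM_YEARLY = 9.99 * 12   # $119.88
LIFETIME_PRICE = 99.0

# Estimated minutes saved per week for each task.
weekly_minutes = {
    "full-text search": 15,
    "snippet reuse": 20,
    "project organization": 10,
    "prompt library": 15,
    "cross-device sync": 5,
}
# Bulk export is estimated per month rather than per week.
monthly_minutes = {"bulk export": 30}

annual_minutes = sum(weekly_minutes.values()) * WEEKS_PER_YEAR + sum(monthly_minutes.values()) * MONTHS_PER_YEAR
annual_hours = annual_minutes / 60
annual_value = annual_hours * HOURLY_RATE

# ROI = (gain - cost) / cost; break-even = price / value saved per day.
premium_roi_pct = (annual_value - PREMIUM_YEARLY) / PREMIUM_YEARLY * 100
lifetime_breakeven_days = LIFETIME_PRICE / (annual_value / 365)

print(f"{annual_hours:.1f} hrs/year, ${annual_value:,.0f}/year")  # → 62.3 hrs/year, $4,675/year
print(f"Premium ROI: {premium_roi_pct:,.0f}%")                    # → Premium ROI: 3,800%
print(f"Lifetime break-even: {lifetime_breakeven_days:.1f} days")  # → Lifetime break-even: 7.7 days
```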

Plan Comparison for Data Scientists

Data scientists running ML projects need Premium - the Basic plan's 2-folder cap blocks organizing even a single project by pipeline phase.

| Feature | Basic (Free) | Premium ($9.99/mo) | Premium Lifetime ($99 once) |
|---|---|---|---|
| Folders & subfolders | Up to 2 folders | Unlimited | Unlimited |
| Pinned chats | Up to 2 | Unlimited | Unlimited |
| Saved prompts | Up to 2 | Unlimited + chaining | Unlimited + chaining |
| Full-text search | Up to 5 results per query | Unlimited | Unlimited |
| Bulk export (TXT/JSON) | Not included | Included | Included |
| Cross-device sync | Not included | Included | Included |
| Media gallery (for generated plots) | Included | Included | Included |
| Cancellation / refund | N/A | Cancel anytime | 14-day money-back guarantee |

Recommendation: Start with Premium monthly for 1-2 months to validate the workflow. Upgrade to Premium Lifetime once you're confident - the $99 one-time pays for itself in 10 months versus monthly and saves $500+ over 5 years. Teams of 5+ data scientists should evaluate the Enterprise plan ($12/seat/month or $10/seat/year) for admin dashboard, centralized billing, and seat management.
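The Lifetime recommendation rests on two numbers. The first is how many months of monthly billing it takes to reach the one-time price. The second is the cumulative difference after five years. Here is a quick check using the list prices above. It ignores taxes, price changes, and refunds.

```python
MONTHLY_PRICE = 9.99
LIFETIME_PRICE = 99.0


def monthly_cost(months: int) -> float:
    """Total paid on the monthly plan after a given number of months."""
    return MONTHLY_PRICE * months


def breakeven_months() -> int:
    """First whole month at which cumulative monthly billing reaches the Lifetime price."""
    months = 1
    while monthly_cost(months) < LIFETIME_PRICE:
        months += 1
    return months


five_year_savings = monthly_cost(60) - LIFETIME_PRICE
print(breakeven_months())           # → 10
print(f"${five_year_savings:.2f}")  # → $500.40
```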

Best Practices for Data Science Workflows

Follow these best practices to maximize productivity with ChatGPT Toolbox for data science work:

- Never paste sensitive data: ChatGPT Toolbox is local-first and GDPR compliant, but ChatGPT itself receives whatever you send. Anonymize datasets, use synthetic examples, and follow your organization's AI usage policies for real data.

- Pick the right ChatGPT model: Use GPT-5.3 Instant for quick code generation and everyday questions, GPT-5.4 Thinking for tricky debugging and complex ML design, and GPT-5.4 Pro for long-running agentic coding sessions on hard problems. See our ChatGPT models guide.

- Version your prompts: When you refine a template, rename it with a version suffix (eda-init-v2). Keep old versions for comparison or rollback.

- Document assumptions in the conversation: Don't just paste code - explain what the data looks like, what the target is, and what's known. Future you searching for this chat will thank present you.

- Use prompt chaining for pipelines: For recurring multi-step workflows (EDA -> features -> baseline), build a chain that runs all steps automatically.

- Export before archiving: When you finish a project, bulk export the folder as JSON before moving it to Archive. This gives you a clean, portable artifact.

- Pin the "how do I..." references: The 3-5 conversations you consult most often (e.g., "how to normalize a confusion matrix in seaborn") should be pinned for instant access.

- Review and curate monthly: Set a calendar reminder to review your folder structure every month. Move completed projects to Archive and delete chats you no longer need.
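For the first practice on the list, one lightweight way to strip identifiers before pasting sample rows into ChatGPT is to replace them with salted hash tokens. The sketch below uses only the Python standard library. The column names and the per-session salt are illustrative assumptions. Hashing is pseudonymization rather than full anonymization, so it does not replace your organization's AI usage policy.

```python
import csv
import hashlib
import io
import secrets

# Hypothetical columns that identify a person; adjust to your dataset.
SENSITIVE_COLUMNS = {"email", "customer_id"}

# Random salt kept private for this session, so tokens can't be matched against precomputed hash tables.
SALT = secrets.token_hex(16)


def pseudonymize(value: str) -> str:
    """Map a value to a short token that is stable for this salt."""
    return hashlib.sha256((SALT + value).encode("utf-8")).hexdigest()[:12]


def scrub_csv(text: str) -> str:
    """Return the CSV with sensitive columns replaced by tokens; other columns are left untouched."""
    reader = csv.DictReader(io.StringIO(text))
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=reader.fieldnames)
    writer.writeheader()
    for row in reader:
        for col in SENSITIVE_COLUMNS & set(row):
            row[col] = pseudonymize(row[col])
        writer.writerow(row)
    return out.getvalue()


sample = "customer_id,email,plan,churned\n1001,ana@example.com,pro,0\n1002,bo@example.com,basic,1\n"
print(scrub_csv(sample))
```

The salt stays fixed for the session, so the same customer maps to the same token in every row. Joins and group-bys in the pasted sample therefore still make sense.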

Frequently Asked Questions

Can I trust ChatGPT-generated code for production data science?

No. Always review, test, and validate AI-generated code before production deployment. ChatGPT (including GPT-5.4) still hallucinates library function signatures, uses deprecated APIs, and makes subtle errors on edge cases. Use ChatGPT for productivity - prototyping, brainstorming, initial drafts - but apply standard code review, unit tests, and validation before deploying to production. Save reviewed code in the ChatGPT Toolbox prompt library so future prototypes start from known-good patterns.

How does ChatGPT Toolbox work with Jupyter notebooks or VS Code?

ChatGPT Toolbox is a Chrome extension for chatgpt.com specifically - it doesn't integrate directly with Jupyter or VS Code. The workflow is: brainstorm and generate code in ChatGPT (organized by ChatGPT Toolbox), copy final code into your Jupyter notebook or IDE, and use full-text search in ChatGPT Toolbox to retrieve past solutions when you hit similar problems in the future. Think of ChatGPT Toolbox as your personal "AI conversations database" that complements your IDE workflow.

Should I use GPT-5.3 Instant or GPT-5.4 Thinking for data science work?

Use GPT-5.3 Instant as your default for everyday tasks: generating pandas code, writing SQL queries, explaining statistical concepts, and creating visualizations. Switch to GPT-5.4 Thinking for harder tasks: tricky debugging, designing ML pipelines, analyzing model failures, and interpreting complex results. GPT-5.4 Pro is worth it for long-running agentic work like multi-step data cleaning pipelines or complex feature engineering that benefits from sustained reasoning. See our ChatGPT models guide for the full 2026 lineup.

Is my code secure when using ChatGPT Toolbox?

ChatGPT Toolbox stores folder structures, prompts, and organization metadata locally in your browser. No data is sent to ChatGPT Toolbox servers. Cross-device sync (Premium) uses encrypted sync, still respecting the local-first model. However, the code and data you send to ChatGPT itself are governed by OpenAI's policies - never paste proprietary code, credentials, or personally identifiable data into ChatGPT. Use synthetic examples or anonymized snippets for real work.

Can I share my ChatGPT Toolbox folders with my team?

ChatGPT Toolbox organizes individual ChatGPT accounts. For team collaboration, use bulk export to share specific folders or conversations as TXT or JSON files. For organizations deploying ChatGPT Toolbox to 5+ data scientists, the Enterprise plan adds admin dashboard, centralized billing, seat management, and team analytics - $12/seat/month or $10/seat/year with a minimum of 5 seats.

How do I keep code templates up-to-date as libraries change?

Set a quarterly review reminder to check saved prompts and pinned conversations against current library versions. When you discover an improved approach (new pandas syntax, better scikit-learn API, faster algorithm), update the template and archive the old version with a version suffix. Use bulk export to back up old versions before overwriting. This keeps your prompt library current without losing history.
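If you accumulate many revisions under the version-suffix convention (for example eda-init, eda-init-v2, eda-init-v10), a small helper can pick the current version of each template from a list of names. The list could come from the names in a prompt export. The helper is hypothetical. Treating an unsuffixed name as version 1 is an assumption made for illustration.

```python
import re

# Splits "eda-init-v2" into base "eda-init" and version "2"; the suffix is optional.
VERSIONED = re.compile(r"^(?P<base>.+?)(?:-v(?P<num>\d+))?$")


def latest_versions(names):
    """Map each template base name to its highest-versioned saved name."""
    best = {}
    for name in names:
        match = VERSIONED.match(name)
        if match is None:  # only an empty name fails to match
            continue
        base = match.group("base")
        version = int(match.group("num") or 1)  # an unsuffixed name counts as v1
        if base not in best or version > best[base][0]:
            best[base] = (version, name)
    return {base: name for base, (_, name) in best.items()}


saved = ["eda-init", "eda-init-v2", "sql-optimize", "eda-init-v10", "sql-optimize-v2"]
print(latest_versions(saved))  # → {'eda-init': 'eda-init-v10', 'sql-optimize': 'sql-optimize-v2'}
```

The helper compares version numbers as integers rather than strings. A plain string sort would rank eda-init-v2 above eda-init-v10.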

Does ChatGPT Toolbox help with Kaggle competitions?

Yes. Kaggle workflows involve iterating quickly on EDA, feature engineering, and model experimentation - exactly the high-volume conversation pattern where ChatGPT Toolbox shines. Create a folder per competition, subfolders per model variant, and use full-text search to find past feature engineering tricks across competitions. Many Kagglers report the prompt library alone saves 1-2 hours per competition by eliminating repeated setup questions.

Is the free Basic plan enough to try ChatGPT Toolbox for data science?

The Basic plan (2 folders, 2 pins, 2 prompts, 5 search results per query) is enough to test the extension for a single small project. For any real data science workflow, you'll immediately hit the caps. Either accept the 2-folder limitation for a trial week, or jump straight to Premium monthly ($9.99/month, cancel anytime) to evaluate the full feature set. Most data scientists upgrade within the first week.

Bottom Line

Data science generates 300-500 ChatGPT conversations per month across EDA, feature engineering, modeling, debugging, SQL optimization, and documentation. ChatGPT's native sidebar can't handle that volume, and code vocabulary is too specific for title-only search. ChatGPT Toolbox adds folders, unlimited full-text search, prompt library, and bulk export specifically for the high-volume technical workflow data scientists actually run. The free Basic plan is too limited (2 folders max) for any real project, so Premium at $9.99/month or Premium Lifetime at $99 one-time is the minimum for serious data science use. Premium Lifetime is the right pick for most data scientists - it pays for itself in about 8 days of saved time and saves $500+ over 5 years versus monthly billing. Install ChatGPT Toolbox, set up one project folder with the pipeline-phase template above, and reclaim 60+ hours per year currently lost to scrolling, re-asking, and rebuilding.

Related guides:

- ChatGPT Toolbox for Programmers

- ChatGPT Toolbox for Researchers

- ChatGPT Toolbox Pricing & ROI Calculator

- ChatGPT Models Explained (2026)

- Advanced Search Feature Guide

- Prompt Chaining Guide

Last updated: May 9, 2026